
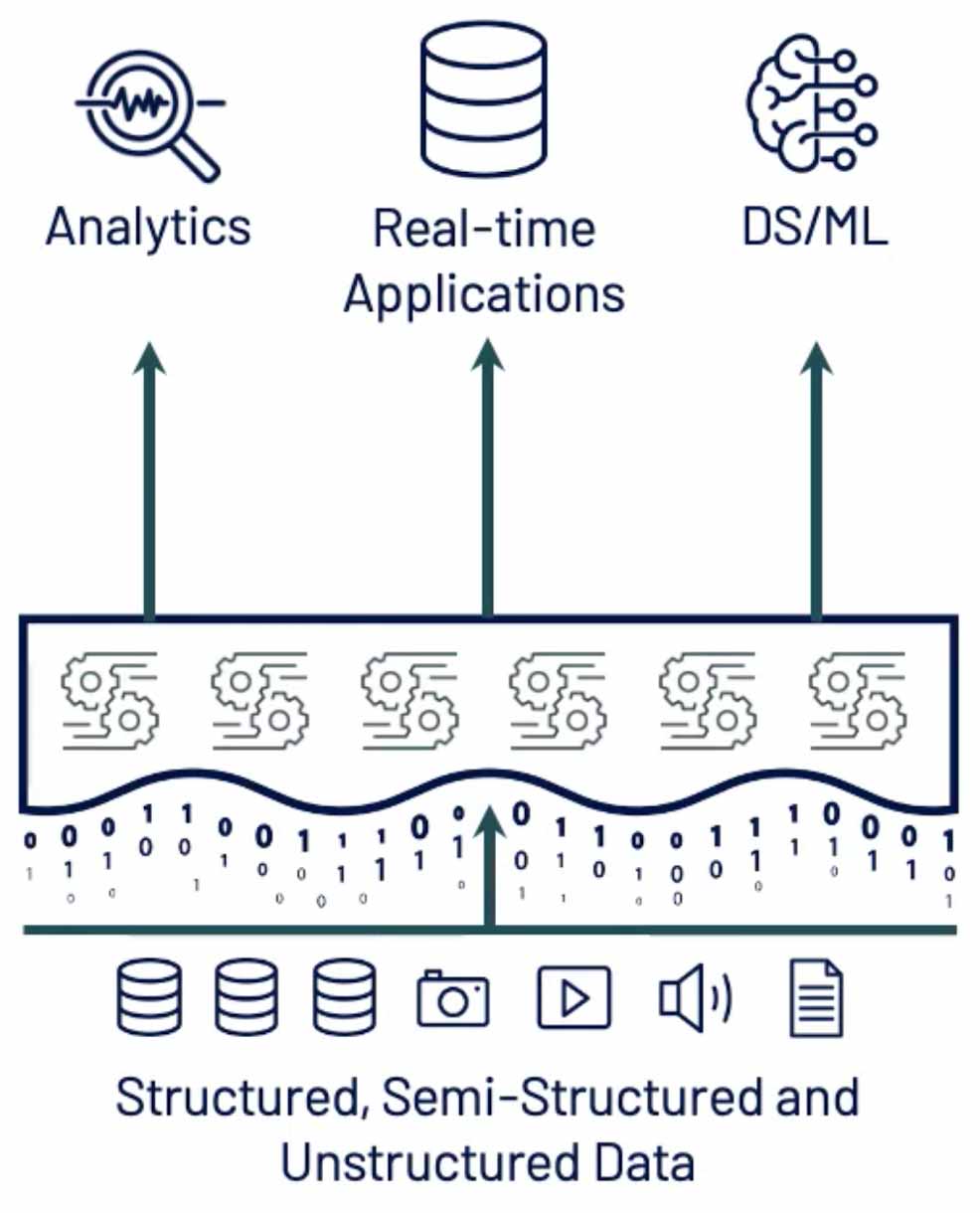

12/21/2023

SQL lakehouse

Notebooks: Data engineers can use a notebook to write code that reads, transforms, and writes data directly to the Lakehouse as tables and/or folders.
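A minimal PySpark sketch of that read, transform, and write flow is shown here. The function name `clean_and_store`, the paths, and the table name are hypothetical, and the sketch assumes a notebook with a Lakehouse attached so that relative `Files/...` paths resolve against it.

```python
def clean_and_store(spark, source_path, table_name, folder_path=None):
    """Read a CSV file, drop duplicate rows, and save the result to the Lakehouse.

    `spark` is the SparkSession the notebook provides; every path and name
    passed in is an illustrative placeholder, not a required value.
    """
    # Read: load the raw CSV, treating the first row as column names.
    raw = spark.read.format("csv").option("header", "true").load(source_path)

    # Transform: a placeholder step; a real pipeline would do more here.
    cleaned = raw.dropDuplicates()

    # Write as a table, stored in Delta format.
    cleaned.write.format("delta").mode("overwrite").saveAsTable(table_name)

    # Optionally also write the same rows as plain Parquet files to a folder.
    if folder_path is not None:
        cleaned.write.mode("overwrite").parquet(folder_path)

    return cleaned
```

In a notebook this might be called as `clean_and_store(spark, "Files/raw/orders.csv", "orders_clean")`, optionally passing a folder such as `"Files/clean/orders"` as the fourth argument.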

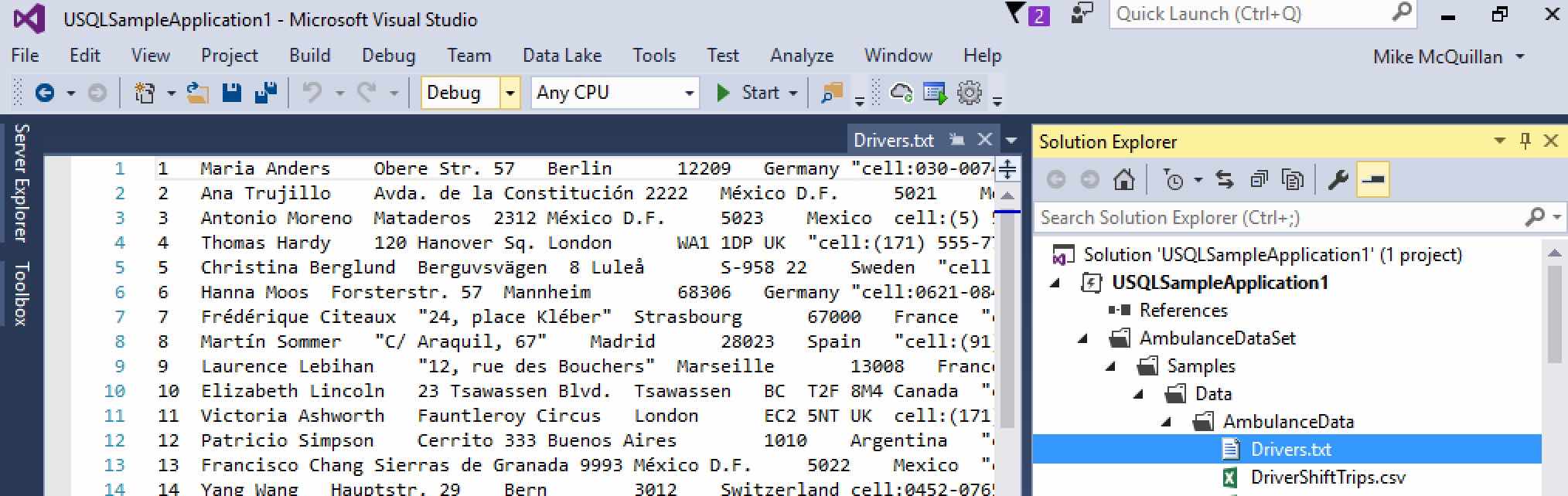

Interacting with the Lakehouse item

A data engineer can interact with the lakehouse and the data within it in several ways:

The Lakehouse explorer: The explorer is the main Lakehouse interaction page. You can load data into your Lakehouse, explore data using the object explorer, set MIP labels, and more. Learn more about the explorer experience: Navigate the Fabric Lakehouse explorer.

Automatic table discovery and registration

Automatic table discovery and registration is a Lakehouse feature that provides a fully managed file-to-table experience for data engineers and data scientists. You can drop a file into the managed area of the Lakehouse, and the system automatically validates it against the supported structured formats (currently the only supported format is Delta table) and registers it in the metastore with the necessary metadata, such as column names, formats, and compression. You can then reference the file as a table and use SparkSQL syntax to interact with the data.

Parquet, CSV, and other formats can't be queried using the SQL endpoint. If you don't see your table, convert it to Delta format.
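For the SparkSQL access mentioned above, a small sketch of querying a registered table from a notebook could look like this. The helper name `preview_table` and the table name in the usage line are hypothetical; the name check is an added precaution, not something the Lakehouse requires.

```python
import re

# Accept only simple names such as "orders" or "schema.orders" (an assumption
# made here to keep arbitrary text out of the SQL string).
_TABLE_NAME = re.compile(r"[A-Za-z_][A-Za-z0-9_]*(\.[A-Za-z_][A-Za-z0-9_]*)?")


def preview_table(spark, table_name, limit=10):
    """Return the first `limit` rows of a registered table using SparkSQL."""
    if not _TABLE_NAME.fullmatch(table_name):
        raise ValueError(f"unexpected table name: {table_name!r}")
    # spark.sql runs the statement and returns a DataFrame.
    return spark.sql(f"SELECT * FROM {table_name} LIMIT {int(limit)}")
```

Calling `preview_table(spark, "orders_clean", 5).show()` in a notebook would print the returned rows.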

The Lakehouse creates a serving layer by automatically generating a SQL endpoint and a default dataset during creation. This see-through functionality lets users work directly on top of the Delta tables in the lake, providing a frictionless and performant experience all the way from data ingestion to reporting.

An important distinction is that this default warehouse is a read-only experience and doesn't support the full T-SQL surface area of a transactional data warehouse. Note that only tables in Delta format are available in the SQL endpoint.
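Because the SQL endpoint only exposes Delta tables, data that currently sits in another format has to be rewritten as a Delta table before it appears there. One way this might be done from a notebook is sketched below; the helper name `to_delta_table`, the set of accepted source formats, and the example path are assumptions made for illustration.

```python
# Source formats this sketch knows how to read (an illustrative choice).
SOURCE_FORMATS = {"parquet", "csv"}


def to_delta_table(spark, source_path, source_format, table_name):
    """Rewrite files in `source_format` as a Delta table named `table_name`."""
    if source_format not in SOURCE_FORMATS:
        raise ValueError(f"unsupported source format: {source_format!r}")

    reader = spark.read.format(source_format)
    if source_format == "csv":
        # CSV carries no schema, so at least take column names from the header.
        reader = reader.option("header", "true")

    # Load the existing files and save them as a Delta table.
    df = reader.load(source_path)
    df.write.format("delta").mode("overwrite").saveAsTable(table_name)
    return df
```

For example, `to_delta_table(spark, "Files/exports/sales", "parquet", "sales")` would read the Parquet files under that folder and save them as a Delta table named `sales`.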
